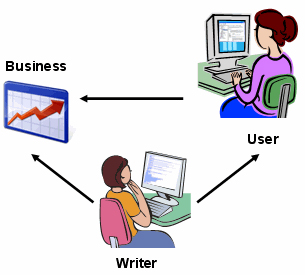

Industries that follow this model include education, training, and health care. For example, in the health industry, a nurse is an agent, a patient is a client, and a hospital is a sponsor. User assistance can apply a similar model, but in this case, the stakeholders are the writer, the user, and the business.

As Figure 1 shows, the writer directly adds value to both the business and users. Writers can have a direct impact on business performance by reducing the costs of information development, production, distribution, translation, and reuse. Writers also have a direct impact on users by improving their experience with a product, namely by providing information that helps them get value from the product. Thus, user assistance plays a key role in adding user value.

But a writer also makes an indirect contribution to a business that we often overlook or do not articulate well: the value that a better-informed, better-performing user adds to a sponsor’s business case. To our credit, writers have evolved from merely saying “We produce well-written, correctly punctuated documentation” to the stronger value proposition that “We support users’ task-centric information requirements.” In other words, we’ve done a good job of moving our value proposition from documenting products to supporting what users do with those products. However, what we’ve done less well is to grasp and communicate the indirect contribution we make to our sponsors, that is, how a better-informed, better-performing user benefits our employers.

Kirkpatrick’s Four Levels of Evaluation

Another useful model comes from the field of instructional technology: Kirkpatrick’s Four Levels of Evaluation. Although Kirkpatrick originally formulated this model for training, it has direct application to user assistance as well. According to Kirkpatrick, we can assess instruction (and user assistance is essentially instruction) at four levels:

- Level 1: Reaction

- Level 2: Learning

- Level 3: Transfer

- Level 4: Results

Since this model’s origins are in training, I will first describe how the Four Levels apply in the context of a training course on operating a drill press. Let’s assume that excessive scrap rates coming out of a machine shop have made management aware of the need for training.

- Reaction: How did the students react to the training itself? We usually assess this through a course evaluation sheet with statements such as “The course met my expectations” and “The instructor was knowledgeable.”

- Learning: Did the students learn anything? We can assess this through testing or lab observations. The instructor can observe the students and certify them using a checklist of targeted drill press competencies.

- Transfer: Did the students go back to their jobs and apply the new knowledge or skills correctly and effectively? Shop supervisors can observe their employees after the training to see whether they apply the right techniques.

- Results: Did the training solve the business problem that triggered it? Did the scrap rates go down?

Now, let’s see how we can apply Kirkpatrick’s model to user assistance:

- Level 1 (Reaction): We see this level in reader response cards or links that ask “Was this information useful?” We also see it in usability tests in which participants rate various aspects of a product or document. Much of the research on typography or layout stops at this level of evaluation, asking “Which document looks more professional?”

- Level 2 (Learning): When users read the user assistance, can they understand it and apply it to the task at hand? For example, did the quick start card work in the lab when we specifically asked users to use it?

- Level 3 (Transfer): This is the tough one. Did users improve their performance in real life because of the user assistance? For example, did real patients comply better with their medication protocols when they received redesigned instructions?

- Level 4 (Results): Did we achieve the business goal we intended the document to address? For example, did support calls go down, did medical claims decrease, did user registrations increase on a redesigned Web site, did the percentage of transactions completed go up, and so forth?
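
A Level 4 question such as “did support calls go down?” comes down to comparing a business metric before and after the redesigned user assistance ships. The following is a minimal sketch of that arithmetic. The function name `relative_change` and all figures are hypothetical and chosen only for illustration; they are not data from any study.

```python
def relative_change(before: float, after: float) -> float:
    """Return the fractional change from `before` to `after`.

    A negative value means the metric decreased.
    """
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


# Hypothetical figures for illustration only.
# Monthly support calls before and after new Help content.
support_calls_change = relative_change(before=1200, after=900)

# Share of transactions completed before and after a site redesign.
completion_rate_change = relative_change(before=0.60, after=0.75)

print(f"Support calls: {support_calls_change:+.1%}")
print(f"Transactions completed: {completion_rate_change:+.1%}")
```

A before-and-after comparison like this shows only that the metric moved. Attributing the change to the user assistance would need further evidence, such as a comparison group that did not receive the redesigned content.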

I think we need to emphasize Levels 3 and 4 more. For example, I’ve never come across a research study on fonts that tested whether users completed tasks faster or made fewer errors depending on which font was used for the Help text—so why do we fight so passionately about it? I would like to see academic research increase its emphasis on user performance—Level 3.

I would also like to see more discussion about how better-informed, better-performing users make positive impacts on an organization’s business outcomes—Level 4. In the next section of this column, I’ll discuss where I believe user assistance makes substantial contributions in this area.