Mobup: The Emotional Aspects of Ubiquitous Computing Through a Camera Phone

Presenter: Matteo Penzo

Matteo Penzo began his talk by sharing his personal view of the frontiers of interaction: Considering users’ emotions and instincts is the new frontier and the challenge we must face in designing digital products.

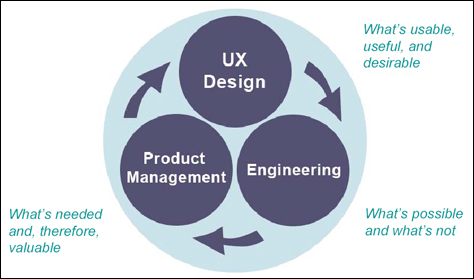

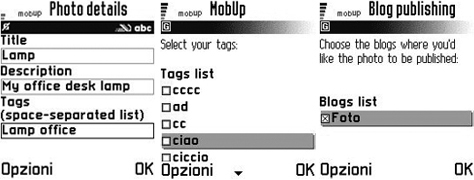

Mobup, shown in Figure 1, perfectly demonstrates what Penzo means. Mobup is a small application that manages photo uploads to Flickr from a camera phone. He conceived and developed it to meet the needs of bloggers on the move. A user can shoot a photo, tag it, and send it using only his phone, drawing on various features of the phone along the way. For example, if a user has a Bluetooth® GPS (Global Positioning System) receiver, he can add geographical information when tagging photos.
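
Penzo’s talk did not show Mobup’s code. As a minimal sketch of the geotagging step, assuming the Bluetooth GPS receiver streams standard NMEA 0183 `$GPGGA` sentences and that the position is attached as plain `geo:lat=`/`geo:lon=` tags (a convention some Flickr users adopted for geotagged photos), turning a fix into tags could look like this. NMEA encodes coordinates as degrees followed by minutes (ddmm.mmmm), so each field has to be converted to decimal degrees first. All function names are hypothetical, and the upload to Flickr itself is omitted.

```python
def nmea_to_decimal(value: str, hemisphere: str) -> float:
    """Convert an NMEA ddmm.mmmm (or dddmm.mmmm) field to signed decimal degrees."""
    dot = value.find(".")
    if dot == -1:
        dot = len(value)
    degrees = int(value[: dot - 2])    # digits before the last two integer digits
    minutes = float(value[dot - 2 :])  # last two integer digits plus the fraction
    decimal = degrees + minutes / 60.0
    return -decimal if hemisphere in ("S", "W") else decimal


def parse_gga(sentence: str) -> tuple[float, float]:
    """Return (latitude, longitude) from a $GPGGA fix sentence.

    The checksum after '*' is ignored in this sketch; real code should verify it.
    """
    fields = sentence.split("*", 1)[0].split(",")
    if len(fields) < 7 or not fields[0].endswith("GGA"):
        raise ValueError("not a GGA sentence")
    # Field 6 is the fix quality; "0" (or empty) means no valid position.
    if fields[6] in ("", "0") or not fields[2] or not fields[4]:
        raise ValueError("no position fix")
    lat = nmea_to_decimal(fields[2], fields[3])
    lon = nmea_to_decimal(fields[4], fields[5])
    return lat, lon


def geo_tags(lat: float, lon: float, precision: int = 4) -> list[str]:
    """Build hypothetical Flickr-style location tags for a photo."""
    return [
        "geotagged",
        f"geo:lat={lat:.{precision}f}",
        f"geo:lon={lon:.{precision}f}",
    ]


if __name__ == "__main__":
    fix = "$GPGGA,123519,4807.038,N,01131.000,E,1,08,0.9,545.4,M,46.9,M,,*47"
    print(geo_tags(*parse_gga(fix)))
    # ['geotagged', 'geo:lat=48.1173', 'geo:lon=11.5167']
```

Rounding to four decimal places (roughly 10 meters) is an arbitrary choice here; it keeps the tags short while staying well within the accuracy of a consumer GPS receiver.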

The World Around Our Screens

Presenter: Fabio Sergio

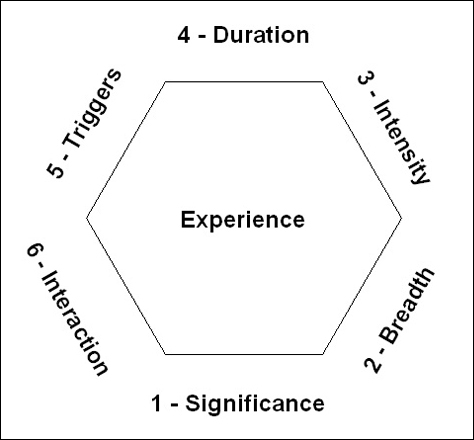

Nowadays, the graphical user interface (GUI) limits our physical interactions with the digital world, according to Fabio Sergio. Sergio starts from the idea of embodiment: physical and social phenomena unfolding in real time and space as part of the world in which we are situated, right alongside and around us. From there, he firmly hopes that the Internet can become “the Internet of things.” Interactions with digitally enhanced artifacts can be enriched by people’s deep emotional relationships with things, or their emotional affordances. Figure 2 shows two examples of “the Internet of things,” objects that users will find it very easy to interact with emotionally:

- Cellular squirrel prototype: a device with a Bluetooth peripheral inside, for managing mobile phone conversations.

- Nabaztag: a small interactive rabbit with a Wi-Fi connection to the Internet. Nabaztag is an avatar rather than an agent.

The original meaning of the term avatar is embodiment, which fits Nabaztag perfectly. Nabaztag is one of the most fully developed avatars available on the Internet.

Such new objects, even simpler ones, can become agents that convey, transmit, and distribute information. They can communicate with users and play an important role in their decision-making processes. In the future, these new agents will provide user interfaces for our mobile phones or PDAs (Personal Digital Assistants). What might our interactions with these devices be like?

The new Wii™ controller from Nintendo®, shown in Figure 3, provides an illuminating example. It is a peripheral that offers a better way of interacting with a video game console. Its sensors recognize a user’s movements, so it responds to gestures, and it can also play sounds. Beyond the screen, interactions through such a device could take advantage of new kinds of user interfaces.

Emotion in Human-Computer Interaction

Presenter: Christian Peter

Christian Peter works for the Human-Centered Interaction Technologies department of the Fraunhofer Institute for Computer Graphics (IGD) in Rostock, Germany.

How can we design interactions with computers that take a user’s emotions into account? First, to create a satisfying interaction, it’s necessary to focus on the user, to pay attention to his emotions, and to create a comfortable situation in which the interaction can unfold smoothly. People show their emotions through their facial expressions, the intonation of their voices, their gestures, their posture, and physiological changes. So where do emotion-recognition technologies stand today?

Researchers have investigated the physiological aspects of emotional expression, and these findings are already being used in real applications such as biofeedback. Recognizing the facial expressions of users who are in motion is still difficult, but researchers are conducting laboratory experiments to solve this problem. At present, voice analysis is the most difficult area of this research, but even here, laboratory experiments have produced positive results. Gesture interpretation and posture analysis are both promising, but there has been less research in these areas.

However, to be useful in interaction design, emotion-recognition technologies must be minimally intrusive (or, better still, not intrusive at all), easy to use, accurate, valuable to customers, easy to apply, robust, and reliable.

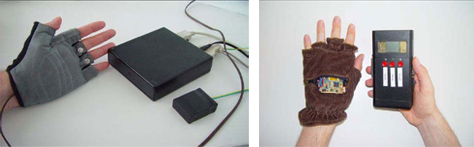

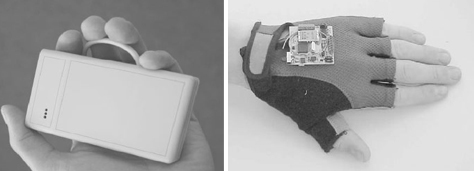

IGD is currently testing a sensor system built into a special glove that collects data on the physiological responses that reflect a user’s emotions. As shown in Figure 4, the first version of the glove was wired; the current version is wireless and includes a memory card and a microcontroller. The glove’s sensors can measure heart rate, skin conductivity, and skin temperature. The next generation of the glove, shown in Figure 5, will be lighter and more attractively designed.
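
The talk did not describe how the glove formats or stores its data. Purely as an illustration, assuming each reading bundles the three measured signals with a timestamp and is logged to the memory card as one line of comma-separated text, a hypothetical record type might look like this. The class name, field names, units, and CSV layout are all assumptions, not IGD’s design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GloveSample:
    """One hypothetical reading from the glove's three physiological sensors."""

    timestamp_ms: int           # time since logging started, in milliseconds
    heart_rate_bpm: float       # heart rate, beats per minute
    skin_conductance_us: float  # skin conductivity, microsiemens
    skin_temp_c: float          # skin temperature, degrees Celsius

    def to_csv(self) -> str:
        """Serialize as one CSV line, e.g. for appending to a log file."""
        return (
            f"{self.timestamp_ms},{self.heart_rate_bpm:.1f},"
            f"{self.skin_conductance_us:.2f},{self.skin_temp_c:.2f}"
        )

    @classmethod
    def from_csv(cls, line: str) -> "GloveSample":
        """Parse a line written by to_csv back into a sample."""
        t, hr, sc, temp = line.strip().split(",")
        return cls(int(t), float(hr), float(sc), float(temp))


if __name__ == "__main__":
    sample = GloveSample(1000, 72.0, 4.25, 33.5)
    line = sample.to_csv()
    print(line)  # 1000,72.0,4.25,33.50
    assert GloveSample.from_csv(line) == sample
```

A plain-text line per sample is easy for a small microcontroller to append and easy to load later for analysis; a binary format would save space at the cost of readability.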

This system could have interesting applications in laboratory testing and in some of the tasks users perform in their jobs, as well as in education and games.

At present, our interactions with technological systems do not take emotions into account, but they could. Doing so is certainly an essential aspect of enriching human/computer interactions.

Virtual Assistant: Work or Fun?

Presenter: Leandro Agrò

Leandro Agrò proposed that we shift from the idea of a graphical user interface (GUI) to an emotional user interface (EUI) and told us that we can bridge that gap using the technological capabilities we already have. He said we must strive for complete integration of the necessary technologies. Agrò’s research on virtual assistants is a big step toward this goal. Figure 6 shows two frames from his demo videos.

In Agrò’s opinion, a virtual assistant need not understand every element of a user’s communication. It is important, however, that the assistant be proactive in managing a situation, even if it cannot always solve the user’s problems.

Social/Cultural Cues for More Effective Human/Machine Interaction

Presenter: Dario Nardi

Dario Nardi, PhD, teaches at the University of California at Los Angeles and is a founding faculty member of its new program in Complex Human Systems. His teaching focuses on the modeling and simulation of social systems.

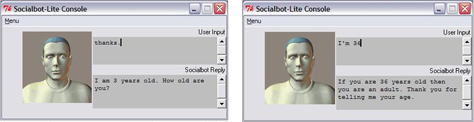

Nardi spoke about his project SocialBot, which began in 1997 with the goal of improving natural-language-parsing engines and the efficiency of chatbots, or chat virtual agents. Figure 7 shows the SocialBot user interface. In 2002, Nardi created a conversational agent that had almost 4,000 behaviors. A year later, he improved the chatbot by giving it a changeable identity and making it programmable by other people. The project’s underlying purpose was to validate the importance of socially relevant behaviors in conversations.

Adding some personal information to a message can improve the quality of an interaction: for example, a clue about a person’s status or a reference to his mother’s favorite color. In fact, even small details can contribute dramatically to a more satisfying interaction and a more human form of communication. The aim is to create virtual agents with practical abilities, in order to achieve a more natural, pleasing, and useful interaction.
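
The talk did not show how SocialBot stores or reuses personal details. As a toy sketch of the idea in the paragraph above, under the assumption that facts arrive as simple “my X is Y” statements, the hypothetical `MemoryBot` class remembers such a detail and weaves it into an otherwise generic reply. It is an illustration of the principle, not SocialBot’s actual design.

```python
import re


class MemoryBot:
    """Toy chatbot that remembers 'my <thing> is <word>' facts and reuses them.

    Hypothetical illustration only; not SocialBot's actual design.
    """

    # Matches e.g. "my mother's favorite color is blue"; the value is one word.
    FACT = re.compile(r"\bmy (?P<key>[\w' ]+?) is (?P<value>[\w'-]+)", re.IGNORECASE)

    def __init__(self) -> None:
        self.facts: dict[str, str] = {}

    def reply(self, message: str) -> str:
        match = self.FACT.search(message)
        if match:
            key = match.group("key").strip().lower()
            value = match.group("value")
            self.facts[key] = value
            return f"I'll remember that your {key} is {value}."
        if self.facts:
            # Personalize a generic answer with the most recently learned detail.
            key, value = list(self.facts.items())[-1]
            return f"Tell me more. By the way, is {value} still your {key}?"
        return "Tell me more."


if __name__ == "__main__":
    bot = MemoryBot()
    print(bot.reply("My mother's favorite color is blue."))
    print(bot.reply("What should I wear today?"))
```

A real conversational agent would need far richer parsing; the point is only that one remembered detail changes the tone of an otherwise generic reply.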