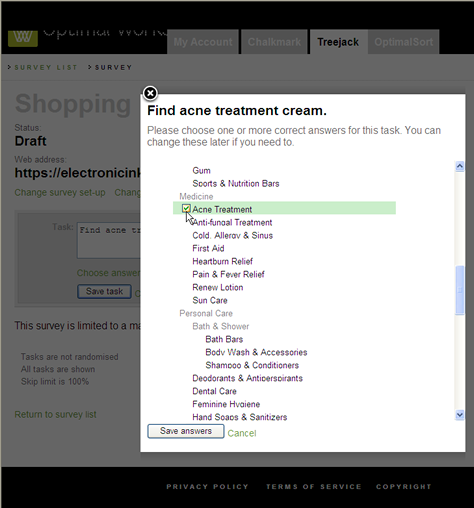

Testing Findability with Chalkmark

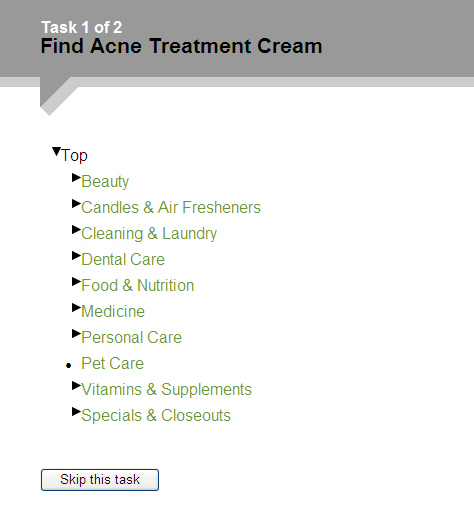

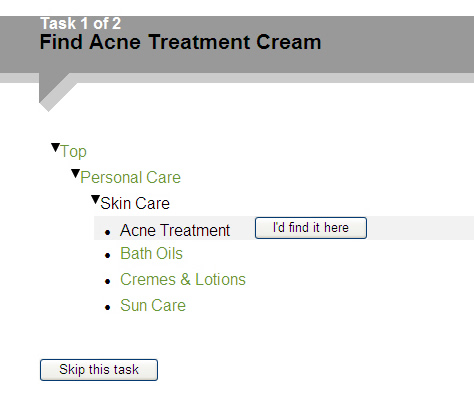

An online, unmoderated testing tool, Chalkmark lets you test findability in a Web application design. Chalkmark gives participants a task such as “Find special offers on cruises” and presents a screenshot, as shown in Figure 1. Participants click the link in the screenshot where they think they would find the information they need. Then, Chalkmark presents the next task and the next screen. Chalkmark presents the test results as heatmaps that show where participants clicked during each task, including concentrations of clicks and how many participants clicked each area of a screen.

Because each test task involves clicking a static screenshot, Chalkmark is ideal for testing wireframes and early design concepts. The trade-off is that the only information Chalkmark captures is participants’ clicks on a single screen. While you can use closed card-sorting tools and tree-testing tools like Treejack to test an information hierarchy in isolation, Chalkmark lets you evaluate an information architecture within the context of a page design.

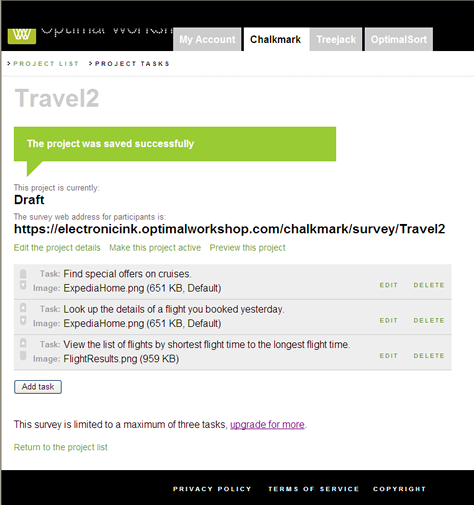

Setting Up a Chalkmark Study

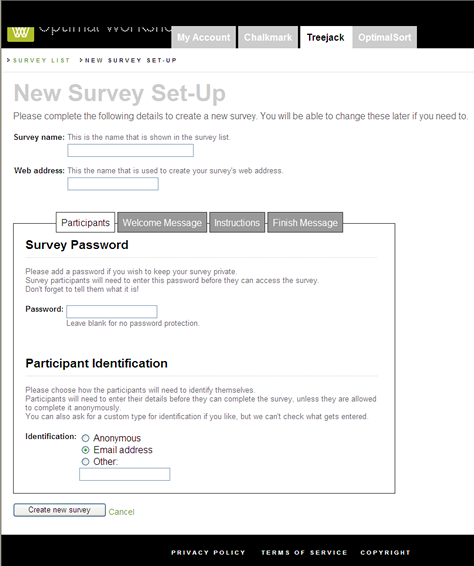

It is fairly easy to set up a study with Chalkmark’s simple user interface. Once you’ve named the study and set up a welcome message, instructions, and a concluding message, you can add tasks and select images to present with the tasks, as shown in Figure 2. A minor weakness of the setup user interface is that it shows only the file names for the images accompanying the tasks. Thumbnails would be helpful in identifying the images you’ve chosen for each task.

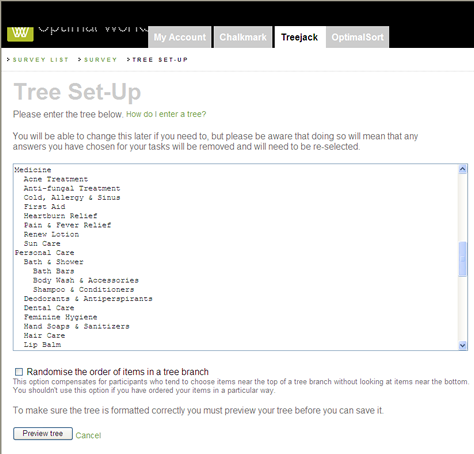

A problem throughout both Chalkmark and Treejack is the lack of online Help. The relatively simple and intuitive setup user interface makes this less of a problem, but because Chalkmark is a new tool, guidance on how to create a study, how to get the most out of this technique, and other tips would be helpful.

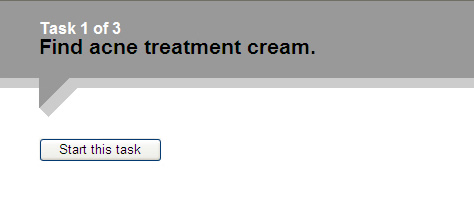

Testing with the Participant User Interface

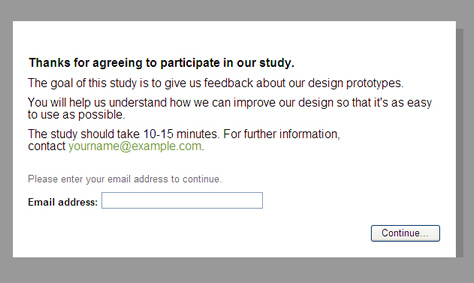

Once you’ve created a study, Chalkmark provides a link for you to send to participants. When participants click the link, the welcome screen shown in Figure 3 appears, displaying a brief explanation of the study’s purpose. You can either ask participants to provide an email address or another form of identification, or make the study anonymous. You can optionally require a password on this screen, too.

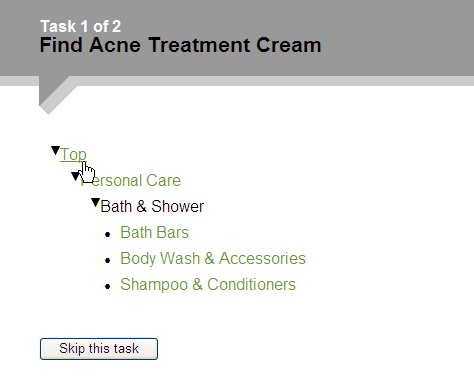

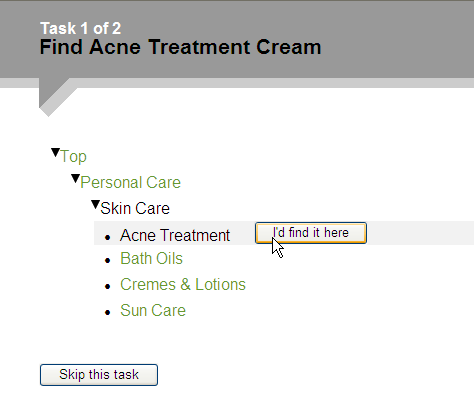

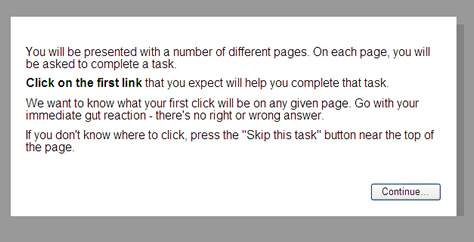

Since there is no moderator, clear instructions are extremely important to ensure participants know what to do. Unfortunately, the instructions in both Chalkmark and Treejack are text only and offer little formatting capability, as you can see in Figure 4. Participants may skim over this dull-looking text, assuming they can just figure it out as they go along. Example images or illustrations would do a better job of explaining the test concept and would give participants a better idea of what to expect next. Worse, once participants leave the instructions page, there is no way to view the instructions again or to access any other Help.

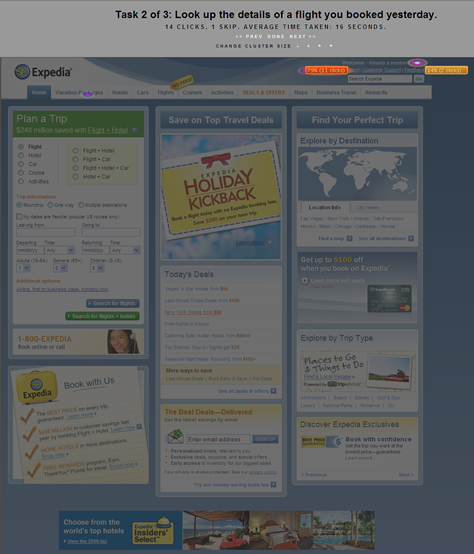

Fortunately, the task screens in Chalkmark are extremely simple, with a task description appearing above a screenshot and a link to skip the task. The header clearly shows a participant’s current location in a study, with the current task number and total number of tasks.

After reading the description of a task, participants click the link in the screenshot that they think would let them accomplish it. Each click displays a message page thanking the participant and indicating that the next task is loading. I have found that some participants are surprised when their first click immediately ends the task and starts another one. They expect their clicks to take them to another page where they can continue the task. Some have said this fails to give them any sense of whether they’ve gotten the task right or wrong.

Unfortunately, unlike most online card-sorting and usability-testing tools, Chalkmark provides no way of gathering additional feedback from participants, either by letting them make comments or through survey questions. The first click on each static screenshot is all that Chalkmark collects, which makes it a very simple, focused tool, but also a very limited one.

Viewing the Test Results for a Chalkmark Study

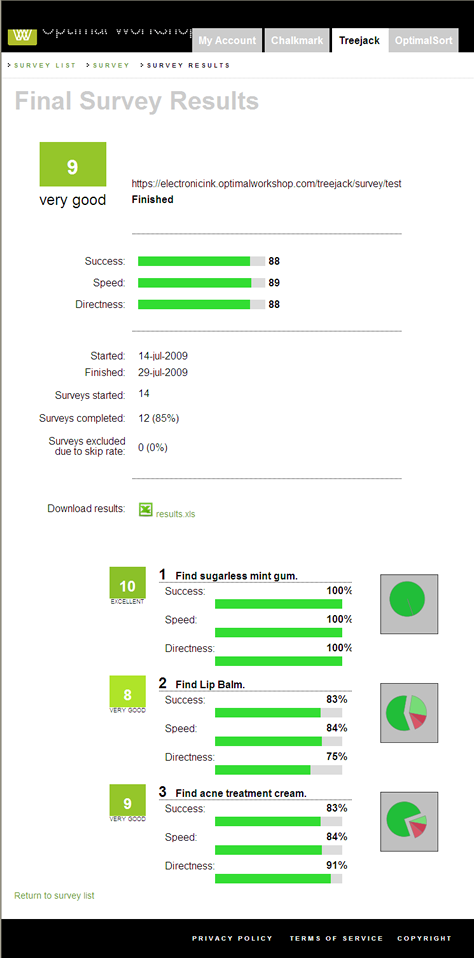

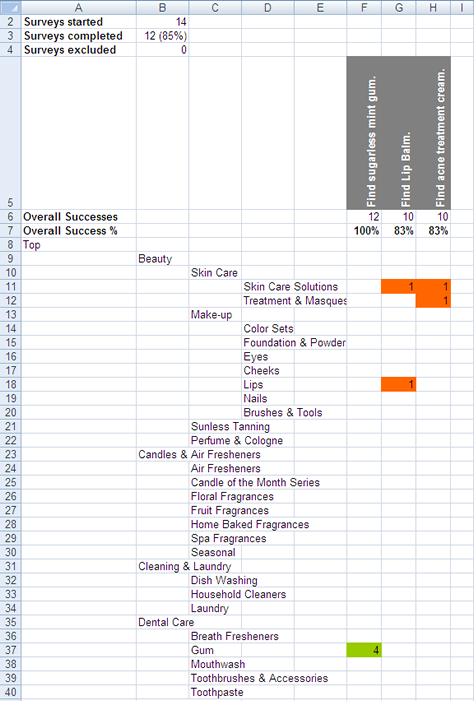

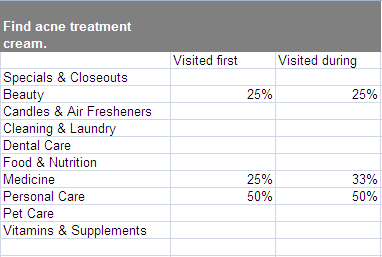

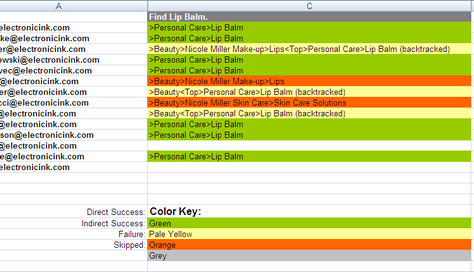

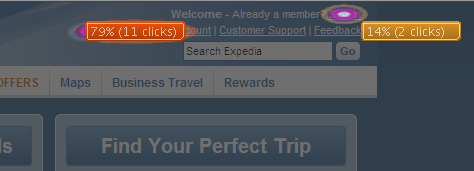

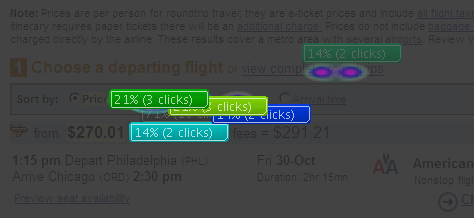

The test results for each Chalkmark task appear on click heatmaps like those shown in Figures 5 and 6. Hotspots show where participants clicked. Statistics boxes for areas that received multiple clicks show the number and percentage of clicks each area received. These visualizations clearly show whether participants clicked the correct areas or, if not, where else they expected to find things.

The heatmaps suffer from a few technical problems. For example, the statistics boxes sometimes appear directly over the hotspots or on top of one another, making it difficult to see the hotspots, which links participants clicked, and any statistics boxes underneath, as you can see in Figure 7. It would be helpful to be able to drag these boxes out of the way.

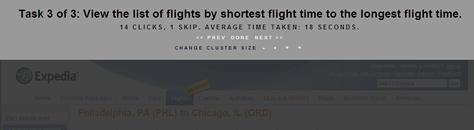

Controls let you change how Chalkmark clusters clicks in heatmaps, as well as the size of the clusters, but neither the meanings of these settings nor the way Chalkmark clusters clicks is clear. Some explanation would be helpful, but again, Chalkmark provides no instructions or Help explaining how to interpret the results.

As shown in Figure 8, the header for the heatmap shows the total number of clicks, the number of participants who skipped the task, and the average time participants took to complete the task. As with any unmoderated test, a single participant who is interrupted during a task could easily skew the average task time. To get more accurate task times, it would be helpful to see a list of all participants with their individual task times and to be able to exclude outliers.
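To illustrate how much one interrupted participant can distort an average, the following minimal Python sketch uses hypothetical task times (illustrative numbers, not Chalkmark data) for six participants, one of whom was interrupted. It compares the plain mean with the median and with the mean after dropping outliers using Tukey’s fences (values beyond 1.5 times the interquartile range), which is just one common rule of thumb. Chalkmark offers no such filter; you would need the individual task times to apply one yourself.

```python
from statistics import mean, median, quantiles

# Hypothetical task times in seconds for six participants (illustrative only).
# The last participant was interrupted partway through the task.
task_times = [8.2, 11.5, 9.7, 12.1, 10.4, 240.0]


def drop_outliers(times, k=1.5):
    """Keep only times within Tukey's fences: [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(times, n=4)  # quartiles; requires Python 3.8+
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [t for t in times if low <= t <= high]


kept = drop_outliers(task_times)
print(f"Mean of all times:     {mean(task_times):.2f} s")
print(f"Median of all times:   {median(task_times):.2f} s")
print(f"Mean without outliers: {mean(kept):.2f} s")
```

With these made-up numbers, the single 240-second time pulls the mean up to 48.65 seconds, while the median (10.95 seconds) and the mean without the outlier (10.38 seconds) stay close to what most participants experienced.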

You would naturally want to use the heatmaps in a report or presentation, yet Chalkmark provides no function for downloading the test results or saving the heatmaps. You have to take your own screenshots to save them.

My Overall Assessment of Chalkmark

Chalkmark’s simplicity makes it ideally suited for straightforward findability tests. Within the context of a page design, you can determine whether a page’s information hierarchy and labeling let participants find what they are looking for.

Compared to other online, unmoderated testing tools, Chalkmark is much easier to use—both in terms of setting up a study and analyzing the results. It is far cheaper and more flexible in its pricing plans, too.

However, the downside of this simplicity is that Chalkmark is very limited in what it provides. Seeing only participants’ first clicks in a heatmap fails to reveal the other places in a screenshot participants considered clicking. Nor does Chalkmark show whether participants would find what they were looking for once they’ve clicked a link on the first page. You cannot use Chalkmark for test tasks that require participants to click several things on a page, for example, clicking or hovering to open a navigation menu before clicking a link. Chalkmark’s inability to gather any other type of user feedback, such as comments or survey responses, is also very limiting.

Despite these limitations, Chalkmark has its place as a simple, quick, and inexpensive evaluation tool.