Statistical Sampling

In general, our user research activities involve working with a small subset of our overall audience of users, to

- gather information about a particular topic

- test users’ response to some feature of our design solution

- measure an increase or decrease in the efficiency of performing a certain task

- or some other similar goal

The size of the entire audience prohibits us from involving all of our users in our research activities.

Our first step is to select our sample from the total population of users. If we’ve done that successfully, our sample should reflect, as closely as possible, the composition of the full user population in the user characteristics that matter for our research.

For example, let’s say that we’re measuring the completion times for a set of tasks in a Web application. We should first think about the user characteristics that might make someone more or less able to complete the tasks. These characteristics might include manual dexterity (for mouse control), visual acuity or impairment, and language comprehension skills. We’re less interested in whether users are left-handed or right-handed, male or female. So when selecting our sample, we need to ensure that it reflects the proportion of users with vision impairments, for example, rather than the proportion who are left-handed. We refer to this attribute of the sample as its representativeness. In other words, our sample should be representative of the entire population in the characteristics that affect what we’re measuring.
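To make the idea of a representative sample concrete, here is a minimal Python sketch of proportional sampling by one characteristic. The user records, the `vision_impaired` field, and the function name are all hypothetical, invented for illustration; the sketch assumes we already know this characteristic for every user in the population.

```python
import random
from collections import defaultdict


def proportional_sample(users, key, sample_size, seed=None):
    """Draw a sample whose mix on `key` mirrors the population's mix.

    Each group's quota is its population share times sample_size,
    rounded to the nearest whole person, so the total can differ
    slightly from sample_size when the shares do not divide evenly.
    """
    rng = random.Random(seed)
    groups = defaultdict(list)
    for user in users:
        groups[user[key]].append(user)

    sample = []
    for members in groups.values():
        quota = round(sample_size * len(members) / len(users))
        # Within each group, every member is equally likely to be picked.
        sample.extend(rng.sample(members, quota))
    return sample


# Hypothetical population: 1,000 users, 100 of whom (10%) have a vision impairment.
users = [{"id": i, "vision_impaired": i < 100} for i in range(1000)]

sample = proportional_sample(users, "vision_impaired", sample_size=50, seed=1)
impaired_in_sample = sum(u["vision_impaired"] for u in sample)
print(len(sample), impaired_in_sample)  # 50 participants, 5 of them (10%) vision impaired
```

Left-handedness is deliberately absent from the sketch: we only need to match the population on characteristics that plausibly affect task completion.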

There are a few other things we need to consider. Every member of our overall user population should have an equal chance of being selected for our sample. This factor of sampling is known as randomness. So-called convenience samples, where we choose participants because they’re close to us, limit the likelihood of non-proximal users participating in our study, so they don’t satisfy the requirement for randomness.
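As a rough illustration of randomness, the following Python sketch draws a simple random sample from a list of user IDs using the standard library. The population of 10,000 numeric IDs and the function name are assumptions made for the example; in practice the IDs would come from whatever user list we have available.

```python
import random


def draw_random_sample(user_ids, sample_size, seed=None):
    """Return sample_size distinct users, each equally likely to be chosen."""
    rng = random.Random(seed)  # a fixed seed makes the draw repeatable
    return rng.sample(list(user_ids), sample_size)


# Hypothetical population of 10,000 user IDs.
population = range(1, 10_001)

participants = draw_random_sample(population, sample_size=20, seed=42)
print(len(participants))  # 20
```

A convenience sample would correspond to something like taking the first 20 IDs in the list, which gives every other user no chance of being selected.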

Lastly, our sampling technique should ensure that selecting one person has no effect on the chance that we’ll select another person. This factor is known as independence and is the same principle that governs the probability that a coin toss will result in a head or a tail. The chance of getting a head on a single coin toss is one half, or 50%. The chance that two heads will appear in a row is ½ × ½ = ¼. However, if our first coin toss shows a head, the chance that the second toss will show a head is back to one half, because the two events (our two coin tosses) are independent of one another. (Bear this in mind the next time you see a run of five blacks on a roulette table. You might hear someone say, “The next one must be red; the chances of having six black in a row are really low.” But really, the chance that the next one will be black is the same as on any other spin: close to fifty-fifty, and slightly less only because of the green zero on the wheel.)
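The coin-toss arithmetic can be checked by listing every possible outcome of two tosses. This short Python sketch assumes a fair coin, so that all four outcomes are equally likely; the variable names are ours.

```python
from fractions import Fraction
from itertools import product

# All equally likely outcomes of two fair coin tosses: HH, HT, TH, TT.
outcomes = list(product("HT", repeat=2))

# Chance of two heads in a row, before any coin has been tossed.
two_heads = [o for o in outcomes if o == ("H", "H")]
p_two_heads = Fraction(len(two_heads), len(outcomes))

# Chance of a head on the second toss, given that the first toss was a head.
first_was_head = [o for o in outcomes if o[0] == "H"]
second_also_head = [o for o in first_was_head if o[1] == "H"]
p_second_given_first = Fraction(len(second_also_head), len(first_was_head))

print(p_two_heads)           # 1/4
print(p_second_given_first)  # 1/2
```

The second figure equals the chance of a head on any single toss, which is what independence means: knowing the first result tells us nothing about the second.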

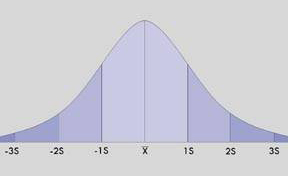

So, once we have selected a random, independent, representative sample, we carefully conduct our user research (a survey, usability testing, and so on), then measure our test results.