Leveraging User Data in Content Delivery

Companies are making increasingly imaginative use of actual user data to improve their user experiences. Famously data driven, Google recently determined which shade of blue was most effective in encouraging users to click links by testing different shades with huge numbers of users. Netflix ran a competition to improve the accuracy of its algorithm for predicting the movies users would like. And, of course, Amazon has its review and recommendation features, which let customers rate product reviews and reduce the extent to which people can game the system. All of these data-driven approaches require large numbers of users to provide quantifiable user data. By leveraging user data through dynamic, data-driven algorithms, sites can deliver customized content that is tailored more flexibly to a particular user’s needs. Drawing on the collective behaviors of users lets us create better user experiences and, in most cases, increases sales as well.

That we would begin to embed knowledge about UX design in products was inevitable. Most processes become increasingly automated as our understanding of them grows. As UX professionals, we can either accept this, adapt, and try to influence the outcome or be steamrolled by it. As Knuth pointed out, science is what we understand. If we can automate some of what we understand, then why not automate it and focus on what are arguably the more interesting and challenging aspects of UX design?

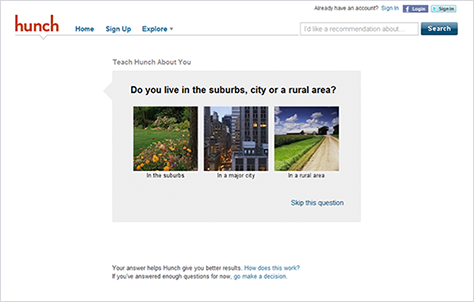

User researchers elicit user data that informs both UX design and the development of the algorithms that deliver the types of customized, data-driven content I’ve described, focusing the entire design and development process on actionable user data. To design effective algorithms, it is essential that algorithm developers have a deep understanding of both a site’s subject matter and how people might use—or misuse—a site. Thus, integrating some aspects of user research into algorithms can have a huge impact on their effectiveness. UX designers must be able to design products that themselves elicit relevant data from users—the data that feeds the content-delivery algorithms.

We can view UX designs that use dynamically generated content in terms of Big-Static versus Little-Dynamic design decisions. For example, in a Web application, Big-Static design decisions encompass the overall structure of a page, the broad type of content a site presents, and a site’s information architecture. We make these types of decisions infrequently, and they set the context for Little-Dynamic design decisions. The Little-Dynamic design decisions are those that we can delegate to algorithms. They dictate where and how we deliver dynamic, data-driven, and user-generated content. This combination of Big-Static and Little-Dynamic design decisions helps to ensure that users experience the predictability of a consistent site design together with the delivery of dynamic content that is relevant to their needs.
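As a rough illustration of this split, the sketch below fixes a page layout up front (the Big-Static decision) and leaves only the contents of one region to a ranking function (the Little-Dynamic decision). The region names, the `Item` fields, and the click-rate ranking rule are all assumptions made for the example; they are not taken from any particular site.

```python
from dataclasses import dataclass

# Big-Static: designers fix the page regions, and they rarely change.
# These region names are hypothetical.
PAGE_LAYOUT = ["header", "navigation", "recommendations", "footer"]


@dataclass
class Item:
    title: str
    clicks: int  # how many users clicked the item
    views: int   # how many users were shown the item


def click_rate(item: Item) -> float:
    """Fraction of views that led to a click; 0.0 if never shown."""
    return item.clicks / item.views if item.views else 0.0


def fill_recommendations(items: list[Item], limit: int = 3) -> list[str]:
    """Little-Dynamic: an algorithm picks what goes in the region."""
    ranked = sorted(items, key=click_rate, reverse=True)
    return [item.title for item in ranked[:limit]]


def render_page(items: list[Item]) -> dict[str, object]:
    """Combine the fixed layout with the dynamically chosen content."""
    page: dict[str, object] = {}
    for region in PAGE_LAYOUT:
        if region == "recommendations":
            page[region] = fill_recommendations(items)
        else:
            page[region] = f"<static {region}>"
    return page
```

Here the user always finds recommendations in the same place, while the items shown there shift as the aggregate click data changes.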

Customizing content in this way builds toward a Web-3.0, or semantic Web, view of the world: a world view that lets us provide much more relevant user experiences based on a richer understanding of our users. One of the major obstacles the semantic Web faces is the construction of detailed ontologies, that is, knowledge structures that define the relationships between different terms. This is a huge challenge. Ontologies generally exist for only specific problem domains, and for a very good reason: much human knowledge is overlaid with meaning that derives from culture and human experience. Thus, capturing knowledge in a way that is useful outside of well-defined boundaries is extremely difficult.

In real-world problem domains, the use of heuristic approaches is far more effective. These techniques are not especially rigorous and do not provide detailed information on particular topics. However, they do provide data that, in aggregate, over thousands or millions of users, provides real value. In many ways, a heuristic approach is a smarter approach, because system smarts then derive from the user base in a model that is akin to that of parallel processing: lots and lots of small decisions and fragments of knowledge, in aggregate, can produce a similar result to a more complex, centrally defined model.
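One way to picture this kind of aggregation is a simple co-occurrence heuristic: count which items users view together in a session, then suggest the items most often seen alongside a given one. This is only a minimal sketch under assumed inputs. The session format and the function names `build_co_views` and `also_viewed` are hypothetical, and real recommendation systems are considerably more involved.

```python
from collections import Counter, defaultdict
from itertools import permutations


def build_co_views(sessions):
    """Count, for each item, how often every other item shares a session.

    Each session is a list of item names viewed by one user (assumed format).
    """
    co_views = defaultdict(Counter)
    for session in sessions:
        # Each ordered pair of distinct items in a session is one small
        # "vote" that the two items are related.
        for a, b in permutations(sorted(set(session)), 2):
            co_views[a][b] += 1
    return co_views


def also_viewed(co_views, item, limit=3):
    """Return the items most often viewed together with `item`."""
    counts = co_views.get(item, Counter())
    return [other for other, _ in counts.most_common(limit)]


sessions = [
    ["tent", "stove", "lantern"],
    ["tent", "stove"],
    ["tent", "stove", "boots"],
    ["tent", "lantern"],
    ["boots", "socks"],
]
co_views = build_co_views(sessions)
suggestions = also_viewed(co_views, "tent", limit=2)
```

No single session says much about how the items relate, and no ontology of camping gear is defined anywhere. The relationships emerge only from the accumulated counts.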