What does this mean for designers of user experience? It means our tools for interaction are changing. The revolution the graphical user interface brought us will pale in comparison to the transformative change in user experience that’s on its way.

Complex Pattern Recognition

It’s no secret that computers are capable of scouring large data sets and quickly and efficiently recognizing complicated patterns in them—ones that humans would have difficulty discovering, no matter how much time they had to study them.

Video and Picture Data

While we can easily identify something as complex as the face of a familiar person, we’d probably be stumped if asked to pick someone out in a large crowd at a sporting event. Security systems at casinos, border crossings, and even the Super Bowl have used facial recognition software for years, with varying degrees of success. In such systems, cameras capture images of human faces, then software compares the features of those faces to photos in a database and finds matches.

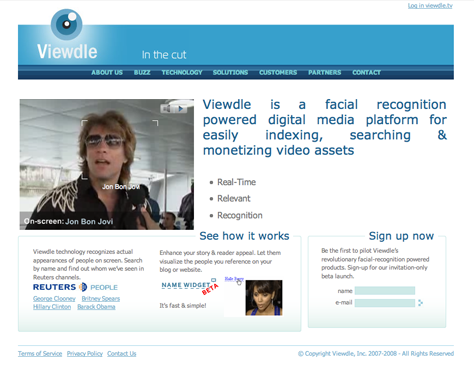
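To make the matching step concrete, here is a minimal sketch of the compare-and-match idea in Python. It assumes each face has already been reduced to a short list of numbers (a feature vector); the names `find_match` and `max_distance`, the sample people, and the threshold value are all hypothetical and are not taken from any real security product. Real systems use far richer features and far larger databases.

```python
import math


def euclidean_distance(a, b):
    """Straight-line distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def find_match(probe, database, max_distance=0.6):
    """Return (name, distance) for the closest enrolled face.

    The name is None when even the closest face is farther away than
    max_distance (an illustrative threshold, not a recommended value).
    """
    best_name, best_distance = None, float("inf")
    for name, features in database.items():
        distance = euclidean_distance(probe, features)
        if distance < best_distance:
            best_name, best_distance = name, distance
    if best_distance <= max_distance:
        return best_name, best_distance
    return None, best_distance


# Hypothetical enrolled faces, each reduced to three made-up feature values.
database = {
    "alice": [0.10, 0.80, 0.30],
    "bob": [0.90, 0.20, 0.50],
}

# A face captured by a camera, already converted to the same kind of vector.
captured = [0.12, 0.79, 0.31]
name, distance = find_match(captured, database)
print(name, round(distance, 3))
```

The threshold is where the "varying degrees of success" shows up in practice: set it too loosely and strangers are reported as matches, set it too tightly and familiar faces go unrecognized.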

While the need for security was the driving force behind the development of this kind of software, we are beginning to apply such technology to other purposes, with intriguing results. For instance, Viewdle created its facial recognition software to index digital video, allowing owners of DV content to extract metadata from their libraries without manually reviewing and tagging the video recordings. So, if you have hours of uncatalogued digital footage of news and entertainment shows, Viewdle could review and tag your video with people’s names—perhaps enabling you to find and even sell those rare Jakob Nielsen sightings you have tucked away on your hard disk to the highest bidder.

There have been significant advances in the recognition of complex patterns in images from other types of software as well. Photosynth, a Microsoft Live Labs product, is capable of picture analysis that can piece together related photos in three-dimensional space. For example, the program can identify the relationships between various shots of a building and, no matter the data source (an amateur digital snapshot, mobile phone picture, professional photograph, or high-res film scan), can reconstruct a multidimensional structure by overlaying photos, one atop another. At the March 2007 Technology, Entertainment, Design (TED) conference, Blaise Aguera y Arcas, one of the creators of Photosynth, demonstrated its capabilities to the crowd’s delight, showing a complete rendering of Notre Dame cathedral composed of thousands of photos the Photosynth software compiled automatically from Flickr.

With both Viewdle and Photosynth, we are beginning to see patterns of photo and video data evolving into a language that computer software can understand. Visual information saturates our daily lives, through body language, facial expressions, environmental indicators, and other signs and symbols we’ve come to recognize. We look at a gray sky and hypothesize that it will rain. We see cracks in a foundation and worry about a building’s structural integrity. We see a frown and wonder what we did wrong. For the present, all of these subtle visual cues, which are plain as day to us, remain hidden knowledge to computers. However, that is beginning to change, and as a result, input devices like the video camera on your notebook computer or mobile phone may become not merely tools for capturing and broadcasting pixels, but methods of interaction that enable much richer user experiences.